Quick Summary (Updated)

This article explains why behavior-based customer segmentation is now one of the most effective levers for improving product adoption in retail banking, and how CX, Product, and Operations teams can operationalize it at scale - without adding complexity.

So how do you know if your product usage is truly healthy or just statistically acceptable?

You’re tracking product usage more closely than ever.

Your dashboards show:

And yet, uncertainty remains.

Across U.S. retail banking, this uncertainty has become the norm. Teams have numbers, but not context.

The core issue is not lack of data. It is the lack of benchmarks that reflect real banking behavior.

Most adoption benchmarks used today are borrowed from SaaS, consumer apps, or generic digital products. They emphasize surface-level activity such as logins, sessions, and clicks, without accounting for how banking customers actually realize value over time.

Customer experience research consistently shows that usage alone is a weak signal unless it’s interpreted through behavior, depth, and progression. Customers may log in frequently and still fail to adopt the features that drive retention, cost efficiency, or cross-product growth.

This is where benchmarks need to change.

As Jocelyn Brown, Head of Customer Success at Hypercontext, explains:

“Value realization is defined by the customer — and it is not static.”

That insight is critical for banking product usage.

A “good” adoption rate is not a fixed percentage.

A “healthy” usage pattern changes by:

Benchmarks that ignore this reality create false confidence.

They make shallow usage look like success, hide early disengagement behind averages, and delay intervention until outcomes are already compromised.

This article is designed to reset how banking teams think about product usage benchmarks.

You’ll learn:

Because in banking, benchmarks don’t exist to impress dashboards.

They exist to guide decisions before adoption stalls.

You’re not short on benchmarks. What you’re short on is benchmarks that actually mean something in banking.

When you ask, “Is our product usage good?”, the numbers you’re usually handed come from:

They look polished and sound credible. But when you apply them to retail banking, they quietly lead you in the wrong direction.

Most benchmarks assume one thing:

More usage equals more value.

That assumption might hold for SaaS products. It doesn’t hold for banking.

Your customers don’t open a banking app to “engage.” They open it to complete a task, build confidence, and move on.

That means:

If you apply generic frequency benchmarks, you end up flagging the wrong customers and missing the real adoption gaps.

When you look at usage without intent, you measure motion, not progress.

Most benchmark reports rely on averages:

Averages feel safe. But in banking, they hide the very signals you need to act on.

Inside your “average” numbers, you often have:

When your dashboard says “within benchmark range,” the real question is:

Which customers are pulling that average up - and which ones are quietly falling behind?

If benchmarks don’t help you see that difference, they don’t protect adoption. They mask risk.

Another mistake generic benchmarks push you toward:

Treating all features equally.

In reality, you already know this isn’t true.

Checking balances, setting up bill pay, managing cards, resolving disputes, or using budgeting tools all require different levels of effort, trust, and confidence.

When you compare them using a single adoption benchmark:

This is how banks end up celebrating surface activity while deeper value drivers remain underused.

If your benchmarks don’t reflect feature complexity and customer effort, they push your teams in the wrong direction.

The biggest risk of generic benchmarks isn’t that they show poor performance.

It’s the fact that they make you feel comfortable when you shouldn’t be.

They tell you:

Meanwhile:

When benchmarks don’t surface behavioral drift, you find out too late: after outcomes move, not before.

If benchmarks are going to help you drive adoption, they must:

Benchmarks should guide action, not just provide reassurance.

That’s why leading banking teams are moving away from generic adoption percentages and toward behavior-anchored product usage benchmarks.

And that’s exactly what we’ll define next.

You’re tracking product usage more closely than ever. You have benchmarks, dashboards, and trend lines, yet uncertainty remains.

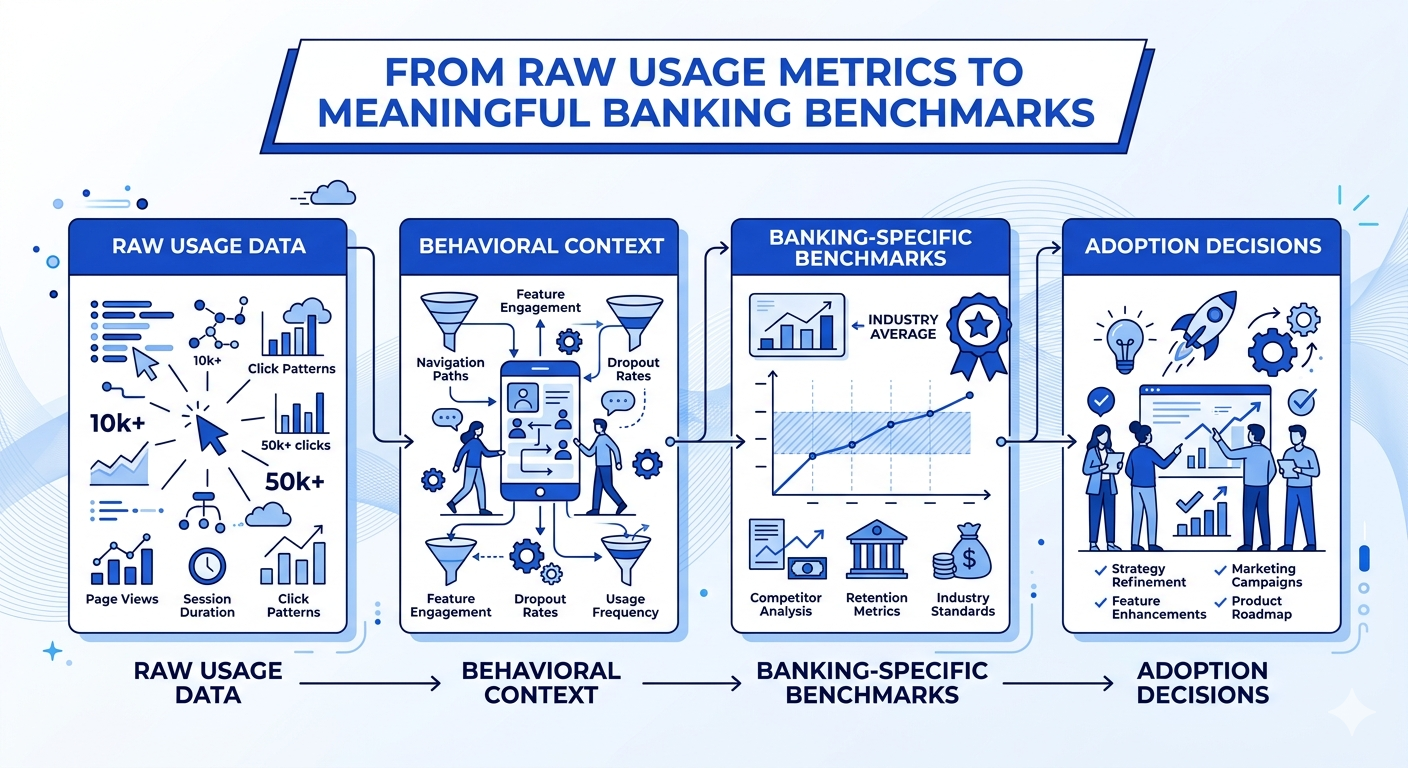

That’s because usage benchmarks without behavioral context create false confidence. In retail banking, numbers alone rarely explain whether customers are actually realizing value.

Most usage benchmarks tell you what is happening:

But they don’t tell you:

A benchmark might look reasonable, even strong, while masking shallow or fragile adoption.

In retail banking, this gap is amplified because:

This is why banks often discover adoption problems after value erosion has already set in.

Averages feel safe. They’re easy to report and easy to defend.

But in banking, averages hide risk.

You might see:

And still miss the fact that:

When teams rely on averages:

The result is benchmark complacency - numbers look fine, but adoption outcomes don’t improve.

This is exactly why the research emphasizes segment-aware interpretation, not single-point benchmarks.

Usage only matters if it maps to customer intent and value realization.

Customers don’t log in to be counted.

They log in to get something done:

This is where benchmarks shift from descriptive to strategic.

As Lincoln Murphy, Customer Success Strategy Expert, notes:

“You can focus on adoption, retention, expansion, or advocacy — or you can focus on the customer’s desired outcome and get all of those things.”

For banking teams, this means:

A feature adoption rate isn’t meaningful unless you know:

High-maturity banks no longer ask:

“Are we above or below benchmark?”

They ask:

“What does this benchmark tell us about adoption health?”

They help you:

This is the difference between benchmark reporting and benchmark intelligence.

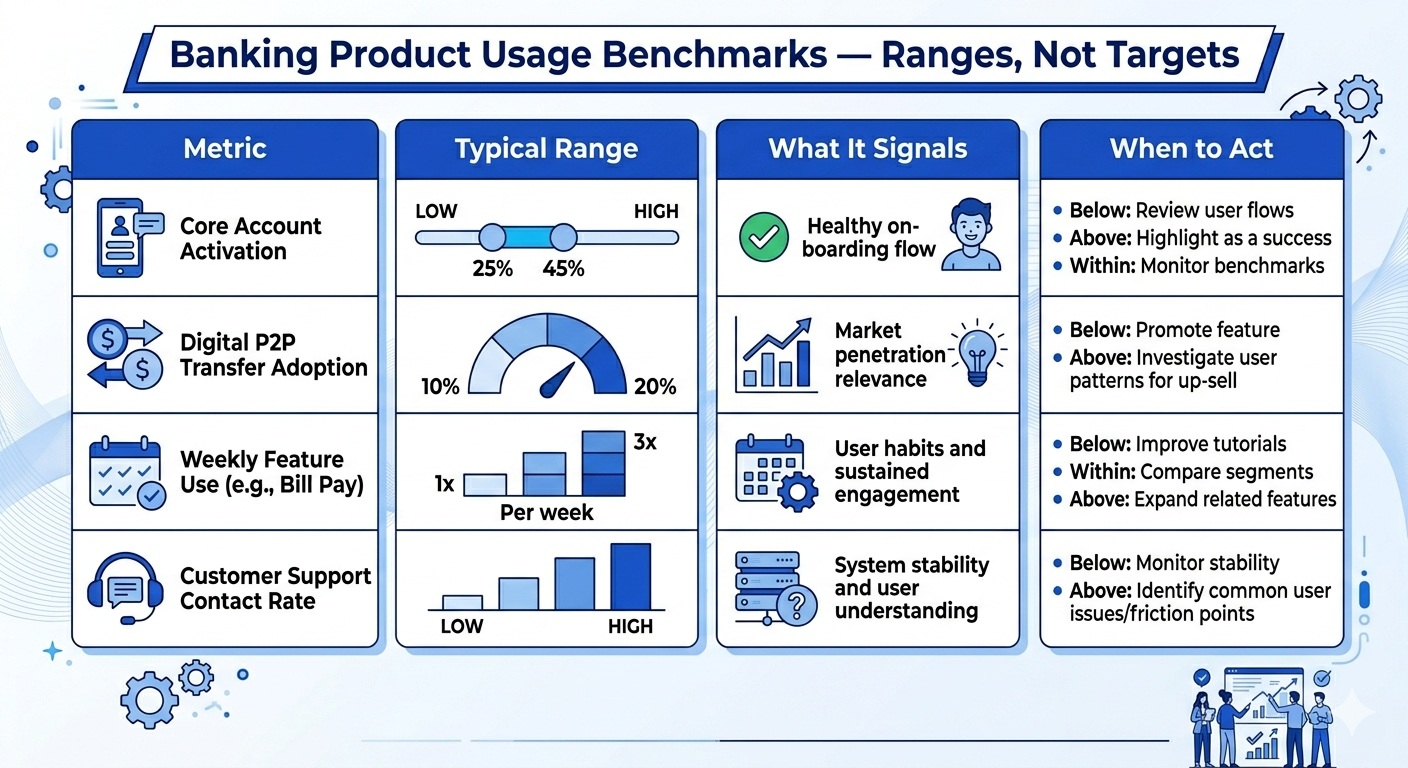

Before looking at specific usage benchmarks, one principle matters:

Benchmarks are not targets. They are signals.

Without context:

In the next section, we’ll break down banking product usage benchmarks by category - not as “good vs bad” numbers, but as ranges with interpretation guidance your CX, Product, and Ops teams can actually use.

Once you accept that benchmarks are signals, not goals, the next step is knowing which benchmarks actually matter in retail banking and how to interpret them correctly.

The mistake most teams make is copying “industry averages” without context. In banking, usage benchmarks must be read through:

Below are the most reliable product usage benchmarks used across retail banking, especially in North America, framed as interpretive ranges, not pass-fail scores.

What it measures

The percentage of customers who complete at least one meaningful digital action after onboarding (not just account creation or login).

Typical benchmark range

60–75% within the first 30 days

What teams often miss

High activation without follow-through often creates false confidence. Activation should always be paired with time-to-value and repeat usage benchmarks.
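As a rough illustration of the definition above, here is a minimal Python sketch of a 30-day activation rate. The record layout (`onboarded`, `meaningful_actions`) and the sample customers are assumptions made for this example, not a real schema.

```python
from datetime import date

def activation_rate(customers, window_days=30):
    """Share of onboarded customers with at least one meaningful
    action within `window_days` of onboarding."""
    if not customers:
        return 0.0
    activated = sum(
        1
        for c in customers
        if any(0 <= (d - c["onboarded"]).days <= window_days
               for d in c["meaningful_actions"])
    )
    return activated / len(customers)

# Hypothetical sample data: logins and account creation are deliberately
# excluded, only meaningful actions are recorded.
customers = [
    {"onboarded": date(2024, 1, 1), "meaningful_actions": [date(2024, 1, 5)]},   # day 4
    {"onboarded": date(2024, 1, 1), "meaningful_actions": [date(2024, 3, 1)]},   # day 60, outside window
    {"onboarded": date(2024, 1, 10), "meaningful_actions": []},                  # never acted
    {"onboarded": date(2024, 1, 15), "meaningful_actions": [date(2024, 2, 1)]},  # day 17
]

rate = activation_rate(customers)
print(f"{rate:.0%}")  # 50%
```

In this sample the rate falls below the 60–75% range, which is a prompt to look at time-to-value and repeat usage next, not a verdict on its own.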

What it measures

The percentage of digitally onboarded customers who perform at least one core action in a given month (e.g., transfers, bill pay, card management).

Typical benchmark range

45–65% MAU

Important nuance

A customer logging in to “check balance” once a month counts as MAU - but may not represent real adoption. This is why MAU must be read alongside feature depth.
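One way to make this nuance visible is to report two rates side by side: customers with any activity in the month, and customers with at least one core action. The sketch below assumes a hypothetical mapping of customer IDs to the actions they performed. The action names are taken from the examples in the text and are not a standard taxonomy.

```python
# Assumed core actions, taken from the examples in the text.
CORE_ACTIONS = {"transfer", "bill_pay", "card_management"}

def mau_breakdown(onboarded_ids, monthly_actions):
    """Split monthly activity into 'any activity' and 'core action' rates.

    monthly_actions maps customer id -> set of action names seen this month.
    """
    total = len(onboarded_ids)
    if total == 0:
        return {"any_activity": 0.0, "core_action": 0.0, "shallow_only": 0.0}
    any_active = [c for c in onboarded_ids if monthly_actions.get(c)]
    core_active = [c for c in any_active if monthly_actions[c] & CORE_ACTIONS]
    return {
        "any_activity": len(any_active) / total,
        "core_action": len(core_active) / total,
        "shallow_only": (len(any_active) - len(core_active)) / total,
    }

# Hypothetical month: "a" only checks balance, "d" and "e" are inactive.
onboarded = ["a", "b", "c", "d", "e"]
actions = {
    "a": {"balance_check"},
    "b": {"transfer", "balance_check"},
    "c": {"bill_pay"},
    "d": set(),
}

breakdown = mau_breakdown(onboarded, actions)
print(breakdown)
```

The gap between `any_activity` and `core_action` is the share of customers who count as active but show no feature depth.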

What it measures

How many value-driving features a customer actively uses - not just whether they log in.

Typical benchmark range

2–3 features per active customer

Why this matters

Research consistently shows that customers using multiple features are significantly more resilient to pricing changes, friction, and competitive offers.
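Feature depth can be approximated by counting distinct value-driving features per active customer. The sketch below is illustrative only. The `VALUE_FEATURES` set and the usage data are assumptions, and each bank would define its own list.

```python
from statistics import mean

# Assumed set of value-driving features; logins and balance checks are excluded.
VALUE_FEATURES = {"bill_pay", "transfers", "card_controls", "budgeting"}

def feature_depth(usage):
    """Map each active customer to the number of value-driving features used.

    usage maps customer id -> set of features touched in the period.
    Customers with no activity at all are left out.
    """
    return {cid: len(feats & VALUE_FEATURES) for cid, feats in usage.items() if feats}

# Hypothetical usage data.
usage = {
    "a": {"login", "balance_check"},                         # active, depth 0
    "b": {"bill_pay", "transfers"},                          # depth 2
    "c": {"bill_pay", "transfers", "budgeting", "login"},    # depth 3
    "d": set(),                                              # inactive, ignored
}

depths = feature_depth(usage)
average_depth = mean(depths.values())
zero_depth = [cid for cid, d in depths.items() if d == 0]
print(depths, round(average_depth, 2), zero_depth)
```

Reporting the customers at depth 0 alongside the average keeps shallow usage from disappearing inside a healthy-looking mean.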

What it measures

The percentage of active users who engage weekly with at least one digital banking capability.

Typical benchmark range

20–35% weekly active users

Key insight

Weekly usage is one of the strongest leading indicators of long-term retention - far more predictive than satisfaction scores.

What it measures

How long it takes a customer to complete their first meaningful, value-realizing action.

Typical benchmark range

3–7 days post-onboarding

Why this matters

The longer value is delayed, the more likely customers are to disengage quietly - without ever signaling dissatisfaction.
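A simple way to track this is the median number of days between onboarding and the first value-realizing action, reported together with the count of customers who have not reached value yet. The record shape and dates below are hypothetical.

```python
from datetime import date
from statistics import median

def summarize_time_to_value(records):
    """records: list of (onboarded_date, first_value_action_date or None)."""
    reached = [(first - onboarded).days
               for onboarded, first in records if first is not None]
    not_yet = sum(1 for _, first in records if first is None)
    return {
        "median_days": median(reached) if reached else None,
        "not_yet_reached": not_yet,
    }

# Hypothetical customers.
records = [
    (date(2024, 1, 1), date(2024, 1, 3)),    # 2 days
    (date(2024, 1, 1), date(2024, 1, 6)),    # 5 days
    (date(2024, 1, 2), date(2024, 1, 14)),   # 12 days
    (date(2024, 1, 5), None),                # no value-realizing action yet
]

summary = summarize_time_to_value(records)
print(summary)  # {'median_days': 5, 'not_yet_reached': 1}
```

The median is used here instead of the mean so that a few very slow customers do not distort the picture. The `not_yet_reached` count captures the customers who disengage quietly.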

What it measures

Where customers start but fail to complete key digital workflows.

Typical benchmark range

25–40% drop-off on non-core or complex features

Critical reminder

Drop-off is not failure - it’s diagnostic data. It tells you where adoption breaks, not that it broke.
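To use drop-off as diagnostic data, it helps to compute it per step and not only end to end. The sketch below assumes a hypothetical bill pay setup workflow with made-up step names and counts.

```python
def funnel_dropoff(step_counts):
    """step_counts: ordered list of (step_name, customers_reaching_step).

    Returns the overall drop-off, the drop-off between each pair of
    consecutive steps, and the transition with the largest loss.
    """
    started = step_counts[0][1]
    completed = step_counts[-1][1]
    overall = 1 - completed / started if started else 0.0
    per_step = []
    for (name_a, n_a), (name_b, n_b) in zip(step_counts, step_counts[1:]):
        loss = 1 - n_b / n_a if n_a else 0.0
        per_step.append((f"{name_a} -> {name_b}", loss))
    worst = max(per_step, key=lambda s: s[1]) if per_step else None
    return overall, per_step, worst

# Hypothetical workflow counts.
steps = [("start", 1000), ("add_payee", 900), ("verify", 700), ("confirm", 680)]

overall, per_step, worst = funnel_dropoff(steps)
print(f"overall drop-off: {overall:.0%}")   # overall drop-off: 32%
print(f"largest loss at: {worst[0]}")       # largest loss at: add_payee -> verify
```

An overall figure of 32% sits inside the typical range, yet most of the loss is concentrated in a single transition, which is where the investigation should start.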

There is no universal “good” benchmark. A healthy benchmark profile looks like this:

If your benchmarks show stability without progression, adoption is likely stalling - even if numbers look “within range.”

High-performing banking teams never look at a single metric alone. They ask:

Benchmarks are not verdicts. They are signals that tell you where to look next.

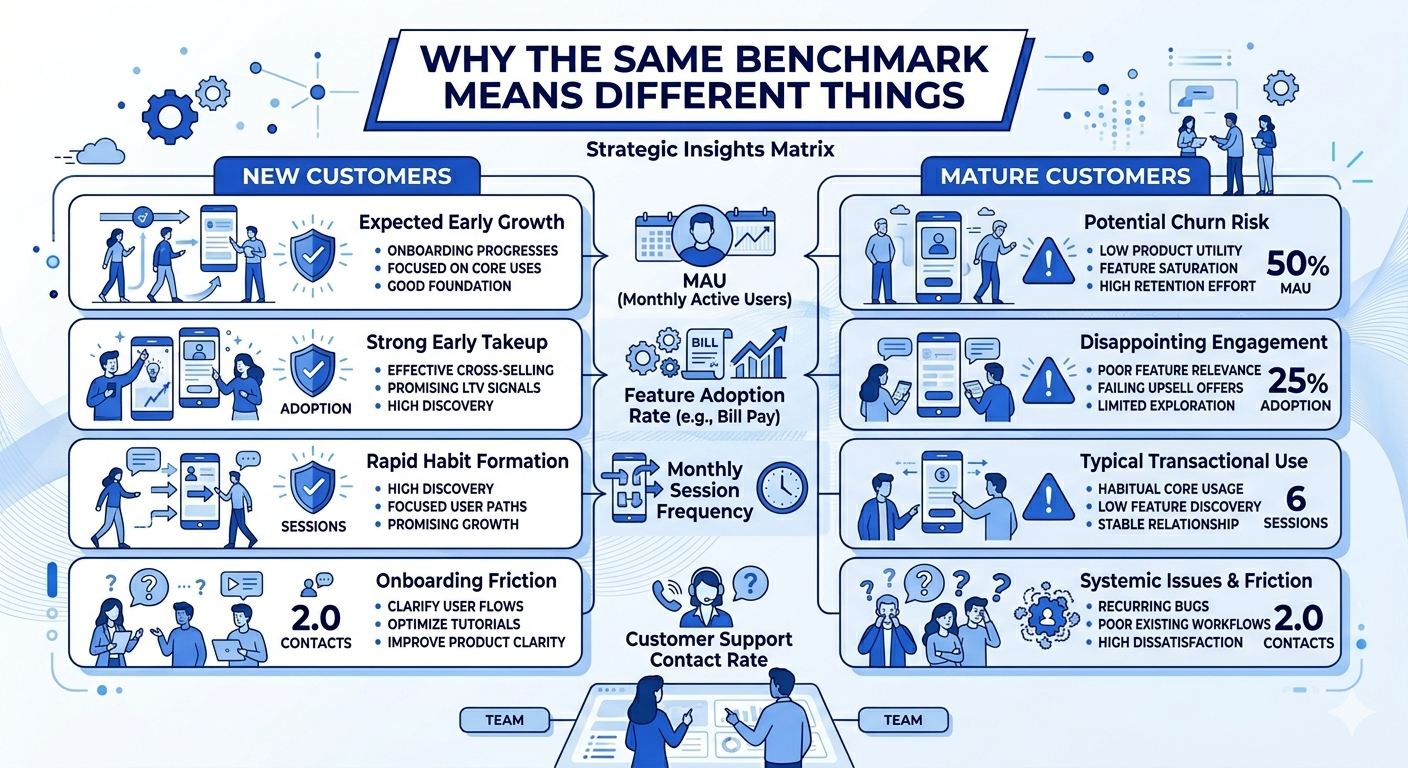

Benchmarks become dangerous when they’re treated as averages.

In retail banking, the same usage number can mean very different things depending on:

If you’re asking, “Is this adoption rate good?”

The more useful question is:

“Good for whom and at what stage?”

This is where many banks misread their own data.

When you look at usage benchmarks in aggregate, they smooth out the very signals you need to act on.

For example:

From a distance, everything looks stable. Up close, adoption may already be breaking.

Aggregate benchmarks answer:

“How are we doing on average?”

But adoption teams need answers to:

“Where is adoption improving, stalling, or quietly deteriorating?”

That requires segment-level interpretation.

Let’s take activation rate as an example.

For new customers (0–30 days):

For established customers (90+ days):

Same number, but a completely different implication.

If you don’t separate these, you’ll either:

Feature adoption is one of the most commonly misread benchmarks in banking.

A 40% feature adoption rate could mean:

When reviewing feature benchmarks, ask:

A “low” benchmark may actually be healthy - and a “high” benchmark may still indicate shallow value realization.

Weekly or monthly usage benchmarks only make sense when aligned to customer intent.

For example:

Many teams treat lower frequency as disengagement.

In reality:

Usage frequency must be interpreted relative to:

Once benchmarks are sliced by segment, patterns become actionable. For example, you may discover:

These insights don’t appear in topline numbers.

At this level, benchmarks stop being reports and start answering:

That’s how benchmarks guide decisions, not just performance reviews.

Without lifecycle context, teams often panic unnecessarily.

Examples:

These are not always negative signals.

Instead of:

“Why is usage no longer growing?”

Ask:

“Is usage evolving the way it should for this segment at this stage?”

Growth, stabilization, and even decline can all be healthy signals - if they align with expected customer progression.

Banks that use benchmarks effectively do three things consistently:

They don’t ask:

“Are we above the industry average?”

They ask:

“Is adoption improving where it should — and breaking where it shouldn’t?”

That’s the difference between benchmarking for reporting and benchmarking for adoption performance.

Most banks don’t get benchmarks wrong because the numbers are bad.

They get them wrong because the questions behind them are incomplete.

When usage benchmarks are misused, they don’t just fail to help - they actively delay action, mask risk, and create false confidence.

Below are the most common mistakes we see across U.S. retail banking teams when benchmarking product usage - and why they matter.

One of the most damaging mistakes is using benchmarks as goals.

You’ll often hear:

The problem?

Benchmarks are reference points, not objectives.

When benchmarks become targets:

High-maturity teams use benchmarks to ask:

“What does this signal tell us about adoption health?”

Not:

“How do we hit this number faster?”

Another common error is benchmarking against banks that:

A national bank with advanced self-service workflows will not show the same usage patterns as:

You end up with conclusions like:

Benchmarks only work when peer context matches product role, customer intent, and operating model.

Usage benchmarks are often reviewed in isolation:

This creates dangerously incomplete narratives.

Adoption is not one behavior.

It’s a progression.

A customer can:

And still look “healthy” in topline benchmarks.

High-performing teams always review benchmarks in clusters, not individually.

Many dashboards focus on:

But adoption risk shows up first in movement, not position.

If you only look at static comparisons, you’ll miss:

A bank slightly above benchmark but trending downward is in more danger than one below benchmark but improving.

Direction matters more than rank.
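The point about direction can be sketched by reading each metric as a pair: position against the benchmark and trend over recent periods. The example below uses a plain least-squares slope. The two banks, their quarterly rates, and the 50% benchmark floor are invented for illustration.

```python
def trend_slope(values):
    """Least-squares slope of values over equally spaced periods."""
    n = len(values)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den

def read_signal(values, benchmark_floor):
    """Return (position, direction) for the latest value and the trend."""
    position = "above" if values[-1] >= benchmark_floor else "below"
    slope = trend_slope(values)
    direction = "improving" if slope > 0 else "declining" if slope < 0 else "flat"
    return position, direction

# Hypothetical quarterly adoption rates against an assumed 50% floor.
bank_a = [0.58, 0.56, 0.53, 0.51]
bank_b = [0.38, 0.42, 0.45, 0.48]

print(read_signal(bank_a, 0.50))  # ('above', 'declining')
print(read_signal(bank_b, 0.50))  # ('below', 'improving')
```

A static comparison would rank the first bank as healthier. The trend says the opposite.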

This is one of the most common — and costly — mistakes.

Teams apply:

Across:

You either:

Benchmarks only become actionable when they are segment-specific and lifecycle-aware.

Finally, many banks treat benchmarks as:

Instead of operational inputs.

When benchmarks aren’t tied to decisions:

Benchmarks should answer:

“What should we do differently this week?”

If they don’t, they’re informational — not strategic.

Banks that extract real value from benchmarks:

They don’t ask:

“Are we above average?”

They ask:

“Where is adoption quietly breaking — and how early can we fix it?”

That shift is what turns benchmarks into leverage.

This is the question every CX, Product, and Strategy leader eventually asks:

“So what’s a good product usage rate in banking?”

And the honest answer is:

There is no single “good” number.

Not because benchmarks are unreliable - but because healthy usage looks different depending on product role, customer intent, and lifecycle stage.

High-performing banks don’t chase one adoption rate. They recognize patterns of healthy behavior and benchmark against those patterns.

Let’s break down what “good” really means in practical, banking-specific terms.

Unlike SaaS or consumer apps, banking products are:

That means:

Instead of:

“Is our usage above benchmark?”

High-maturity teams ask:

“Is usage aligned with how this product is supposed to create value?”

That reframing changes everything.

The most reliable way to interpret benchmarks is to anchor them to product role.

Below are benchmark patterns, not targets, based on how products function in retail banking.

(e.g., checking accounts, transaction monitoring, card controls)

Healthy usage pattern looks like:

Warning signs:

Here, “good” usage is consistency, not intensity.

(e.g., bill pay, transfers, statements, budgeting tools)

Healthy usage pattern looks like:

Warning signs:

In this category, “good” means reliable return, not daily activity.

(e.g., onboarding flows, lending, account setup, disputes)

Healthy usage pattern looks like:

Warning signs:

Here, “good” is measured in time-to-value, not frequency.

One of the biggest benchmark traps is relying on averages.

When you look at:

You lose visibility into distribution.

A “healthy” average can hide:

High-performing teams always ask:

Benchmarks only become meaningful when paired with segment-level analysis.
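A small sketch shows how an acceptable average can conceal two very different segments. The segment names and counts below are hypothetical.

```python
def adoption_by_segment(rows):
    """rows: list of (segment, adopted) pairs, where adopted is a bool.

    Returns the overall adoption rate and the rate per segment.
    """
    totals, adopted = {}, {}
    for segment, did_adopt in rows:
        totals[segment] = totals.get(segment, 0) + 1
        adopted[segment] = adopted.get(segment, 0) + (1 if did_adopt else 0)
    overall = sum(adopted.values()) / sum(totals.values()) if rows else 0.0
    per_segment = {s: adopted[s] / totals[s] for s in totals}
    return overall, per_segment

# Hypothetical data: two equally sized segments with opposite behavior.
rows = (
    [("digital_native", True)] * 8 + [("digital_native", False)] * 2
    + [("branch_first", True)] * 2 + [("branch_first", False)] * 8
)

overall, per_segment = adoption_by_segment(rows)
print(overall)       # 0.5
print(per_segment)   # {'digital_native': 0.8, 'branch_first': 0.2}
```

The 50% average describes neither group. One segment is well adopted and the other is largely disengaged.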

From an executive perspective, “good” usage should answer three questions clearly:

Are more customers:

Is digital usage:

Can you:

If usage benchmarks can’t answer these, they’re incomplete, no matter how strong the numbers look.

So when you ask:

“What’s a good product usage rate in banking?”

The better answer is:

“Good usage is when customer behavior aligns with intended value — consistently, predictably, and with low friction.”

That’s the benchmark that actually matters.

Once you accept that “good usage” is contextual, the next logical question becomes:

“So what ranges should we use to judge whether usage is healthy, weak, or at risk?”

This is where many teams get stuck.

Some banks avoid benchmarks entirely, claiming “every bank is different.” Others latch onto a single number and treat it as a success or failure signal.

High-maturity teams do neither.

They use benchmark ranges as diagnostic guardrails, not scorecards.

Let’s look at how to do this properly in retail banking.

In banking, precision without context is misleading.

A 45% feature adoption rate could mean:

That’s why credible usage benchmarks are always expressed as ranges, interpreted alongside:

Benchmarks answer “where should we look?”, not “are we done?”

These ranges are directional, not promises. Their value lies in how you interpret movement, not where you land on day one.

Illustrative range: 55% – 70% of onboarded customers show repeat usage within 60–90 days

How to interpret this:

What matters most:

Are customers returning on their own, or only when nudged?

Illustrative range: 35% – 55% active feature usage among digitally active customers

How to interpret this:

Key question to ask:

Are customers discovering these features naturally, or only after support or campaigns?

Illustrative range: 15% – 30% adoption within relevant customer segments

How to interpret this:

This is where segment-level benchmarks matter most. Expecting universal adoption here is a common mistake.

Illustrative range: 30% – 45% of digitally active users show weekly meaningful activity

How to interpret this:

Red flag to watch:

Customers who log in frequently but don’t complete meaningful actions.

Many teams compare themselves to:

And then stop there.

The real benchmark question is not:

“Are we above average?”

It’s:

“Are we improving where it matters — for the right customers — at the right time?”

A bank with:

is often healthier than one with superficially “strong” averages.

Instead of reporting benchmarks as static metrics, leading banks use them to:

Benchmarks become a conversation starter, not a performance verdict.

If your dashboards show usage “within benchmark range” but:

Then the benchmark isn’t helping you.

Good benchmarks don’t make you feel safe. They tell you where to act next.

At this point, one thing should be clear:

There is no single “good” usage benchmark in banking.

Any benchmark that isn’t broken down by segment, channel, and journey stage will eventually mislead you.

This is where many otherwise mature teams lose accuracy — not because their data is wrong, but because their benchmarks are too averaged to be useful.

Let’s fix that.

When you look at product usage in aggregate, different behaviors cancel each other out.

For example:

So your dashboard says:

“Overall usage is stable.”

But under the surface:

That’s why high-performing banks never benchmark usage in aggregate alone.

They benchmark movement within cohorts.

The most meaningful usage benchmarks are segment-relative, not bank-wide.

You should expect very different usage patterns across:

What to benchmark by segment:

What good looks like here:

Not that all segments hit the same numbers, but that each segment is improving on its own curve.

If one segment’s usage is flat quarter over quarter while others grow, that’s not “acceptable variance.” It’s an adoption problem hiding in plain sight.
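This check can be sketched as a quarter-over-quarter comparison per segment, flagging any segment that stays flat while at least one other grows. The segment names, rates, and the one-point growth threshold are assumptions chosen for the example.

```python
def flag_flat_segments(previous, current, min_growth=0.01):
    """Flag segments whose usage rate grew by less than `min_growth`
    while at least one other segment grew by that much or more.

    previous/current map segment name -> usage rate for the quarter.
    """
    change = {s: current[s] - previous[s] for s in previous if s in current}
    growing = [s for s, delta in change.items() if delta >= min_growth]
    if not growing:
        return []  # nothing is growing, so there is no contrast to flag
    return sorted(s for s, delta in change.items() if delta < min_growth)

# Hypothetical quarterly usage rates by segment.
last_quarter = {"new_to_bank": 0.40, "mass_market": 0.50, "small_business": 0.30}
this_quarter = {"new_to_bank": 0.46, "mass_market": 0.50, "small_business": 0.34}

flagged = flag_flat_segments(last_quarter, this_quarter)
print(flagged)  # ['mass_market']
```

The flagged segment has the highest absolute rate of the three, which is why it would pass an average-based review unnoticed.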

Channel-level benchmarks matter because channel choice reflects intent, not just preference.

For example:

What to benchmark by channel:

A common misread:

“Mobile usage is up, so adoption must be strong.”

Reality:

Mobile usage going up while assisted interactions also rise often signals digital struggle, not success.

Healthy benchmarks show:

It’s the relationship between channels that matters.
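The cross-channel reading can be expressed as a small rule that looks at both changes together. This is a simplified sketch: the labels and the treatment of "mobile up, assisted flat or down" as the healthy case are assumptions for illustration.

```python
def channel_signal(mobile_change, assisted_change):
    """Interpret period-over-period changes (0.10 means +10%) together.

    Assumption: rising mobile usage is only a good sign when assisted
    interactions (branch, call center) are flat or falling.
    """
    if mobile_change > 0 and assisted_change > 0:
        return "possible digital struggle"
    if mobile_change > 0 and assisted_change <= 0:
        return "digital usage likely absorbing demand"
    return "needs a closer look"

# Hypothetical quarters.
print(channel_signal(0.12, 0.08))   # possible digital struggle
print(channel_signal(0.12, -0.05))  # digital usage likely absorbing demand
```

Neither number is interpreted on its own. The label comes from the pair.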

Usage benchmarks become truly powerful when aligned to journey stages, such as:

What to benchmark at each stage:

This answers questions averages never can:

High-maturity teams don’t ask:

“Is adoption good?”

They ask:

“At which stage does adoption stop improving?”

Benchmarks only become valuable when they trigger decisions.

That means defining:

For example:

This is where benchmarking stops being descriptive and starts becoming predictive.
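One possible way to tie benchmarks to decisions is to store each one as a rule with a threshold, an owner, and a next step. The sketch below is hypothetical throughout. The floors reuse the lower edges of ranges quoted earlier in this article, while the metric names, owners, and actions are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class BenchmarkRule:
    metric: str
    floor: float   # assumed lower edge of the healthy range
    owner: str     # team expected to act (hypothetical)
    action: str    # first step when the floor is breached (hypothetical)

def triggered_actions(rules, observed):
    """Return (owner, action) for every rule whose metric is below its floor."""
    return [
        (rule.owner, rule.action)
        for rule in rules
        if rule.metric in observed and observed[rule.metric] < rule.floor
    ]

rules = [
    BenchmarkRule("activation_rate_30d", 0.60, "CX", "review onboarding nudges"),
    BenchmarkRule("weekly_active_rate", 0.20, "Product", "audit core task flows"),
]

# Hypothetical observed values for one segment.
observed = {"activation_rate_30d": 0.52, "weekly_active_rate": 0.27}

actions = triggered_actions(rules, observed)
print(actions)  # [('CX', 'review onboarding nudges')]
```

A rule without an owner or an action would fail to construct here, which mirrors the point that a benchmark without a decision attached is unfinished.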

Leadership doesn’t need more benchmark charts.

They need clarity on:

The most effective benchmark views answer:

When benchmarks are structured this way, they:

Benchmarks don’t exist to validate success. They exist to surface risk early.

If your benchmarking doesn’t change what teams do:

In banking, the goal isn’t to “beat the benchmark.”

It’s to use benchmarks to stay ahead of adoption decay.

Benchmarks only matter if they change what your teams do next.

If usage benchmarks live only in dashboards, monthly reviews, or leadership decks, they may look impressive, but they won’t move adoption outcomes. In high-performing retail banks, benchmarks are not used to score performance. They’re used to prioritize action.

This section shows how CX, Product, and Operations teams should actually use banking product usage benchmarks to drive decisions that improve adoption, reduce risk, and scale value realization.

Product teams often face a familiar problem:

Too many features. Too many requests. Too little clarity on what actually matters.

Usage benchmarks cut through that noise.

When you benchmark feature usage by segment and journey stage, you can clearly see:

What this changes in practice:

Instead of asking:

“Is this feature live?”

Product teams start asking:

“Is this feature being used the way it was designed to create value?”

That’s a fundamentally different operating mindset.

CX teams are often the first to feel adoption pain — but the last to get actionable signals.

Usage benchmarks change that.

When CX teams can see:

They can move from reactive to proactive.

What this enables:

Instead of waiting for:

CX teams act on behavioral early warnings.

That’s how benchmarks become a CX advantage - not just a reporting layer.

For Operations and Finance leaders, usage benchmarks answer a different question:

Where is adoption failure creating operational drag?

When product usage underperforms, the downstream impact is real:

By benchmarking usage against healthy ranges, Ops teams can:

This reframes adoption from a CX concern into an efficiency lever.

When usage improves:

Benchmarks make that relationship visible.

At the executive level, benchmarks serve a different purpose.

They:

The most effective leadership teams use benchmarks to answer:

When everyone sees the same benchmark context:

Benchmarks become a management system, not a metric set.

Here’s the rule high-maturity banks follow:

If a benchmark doesn’t trigger a decision, it’s not finished.

Every benchmark should map to:

For example:

If that link doesn’t exist, the benchmark is informational — not operational.

You don’t need more benchmarks. You need fewer, better-framed benchmarks that help your teams answer one core question:

“Given how customers are using our products right now, what should we do next?”

When banking product usage benchmarks are:

They stop being retrospective reports.

They become forward-looking adoption signals.

And that’s where real adoption improvement starts.

Turn Usage Benchmarks Into Adoption Decisions

If you’re already tracking product usage but still debating what “good” looks like - a short strategy conversation can bring immediate clarity.

This is not a demo and not a vendor pitch.

Book a 30-Minute Strategy Call with our CX architects.

This is specifically designed for CX leaders, Product heads, Ops leaders, and enterprise sales teams who need benchmarks that drive decisions, not just reports.

There is no single “good” adoption rate that applies to every bank or product. In retail banking, adoption must be evaluated by product type, customer segment, and lifecycle stage.

For example, a 60% adoption rate for core digital banking features may signal healthy value realization, while the same rate for advanced tools (budgeting, alerts, money management) may indicate under-adoption. Benchmarks should be used as context, not targets — the real question is whether adoption is improving, stable, or quietly declining within each segment.

Banking products operate under different conditions:

Because of this, benchmarks from SaaS or consumer apps often misrepresent health in banking. A lower usage frequency can still represent strong adoption if customers consistently realize value at the right moments.

Stability can be misleading. Flat usage trends may mean:

Benchmarks help determine whether stability reflects mature adoption or early stagnation. The key is comparing usage depth, not just frequency — and tracking whether customers expand or narrow how they use products over time.

No. Banking product usage benchmarks should be treated as illustrative ranges, not hard goals. Their purpose is to:

The most effective banks use benchmarks to ask better questions — not to enforce arbitrary thresholds.

High-maturity teams review benchmarks on a quarterly cadence, with lighter monthly monitoring for early risk signals. Benchmarks should evolve as:

Outdated benchmarks can be as dangerous as having none — especially when leadership decisions rely on them.