
Are your CX surveys telling you what’s wrong or just confirming what you already know?

Direct Answer: When Should You Use Open-Ended vs. Closed-Ended Questions in CX Surveys?

Use closed-ended questions when you need measurement, benchmarking, and dashboards.

Use open-ended questions when you need root causes, emotions, and deeper insights.

The most effective CX surveys combine both, using closed-ended for signal and open-ended for diagnosis, enabling real-time decision-making.

Most CX teams believe they have a data problem. They don’t. They have a decision latency problem.

Because today, enterprises already track:

Yet:

customers still churn

drop-offs still happen

revenue leaks continue

Across most CX systems:

But nothing changes in real time, because surveys are designed to measure, not to trigger action.

This creates a critical gap: you can see the problem, but you can’t act on it.

Most survey strategies fall into two extremes:

This leads to a structural failure: Data exists but decisions don’t improve.

This creates the central CX trade-off: depth of insight vs. operational scalability.

When surveys are not designed for usability:

Insight without execution = lost revenue

As Shep Hyken explains:

“The best customer experiences are proactive, not reactive.”

But most surveys are still built primarily for post-event analysis instead of operational responsiveness.

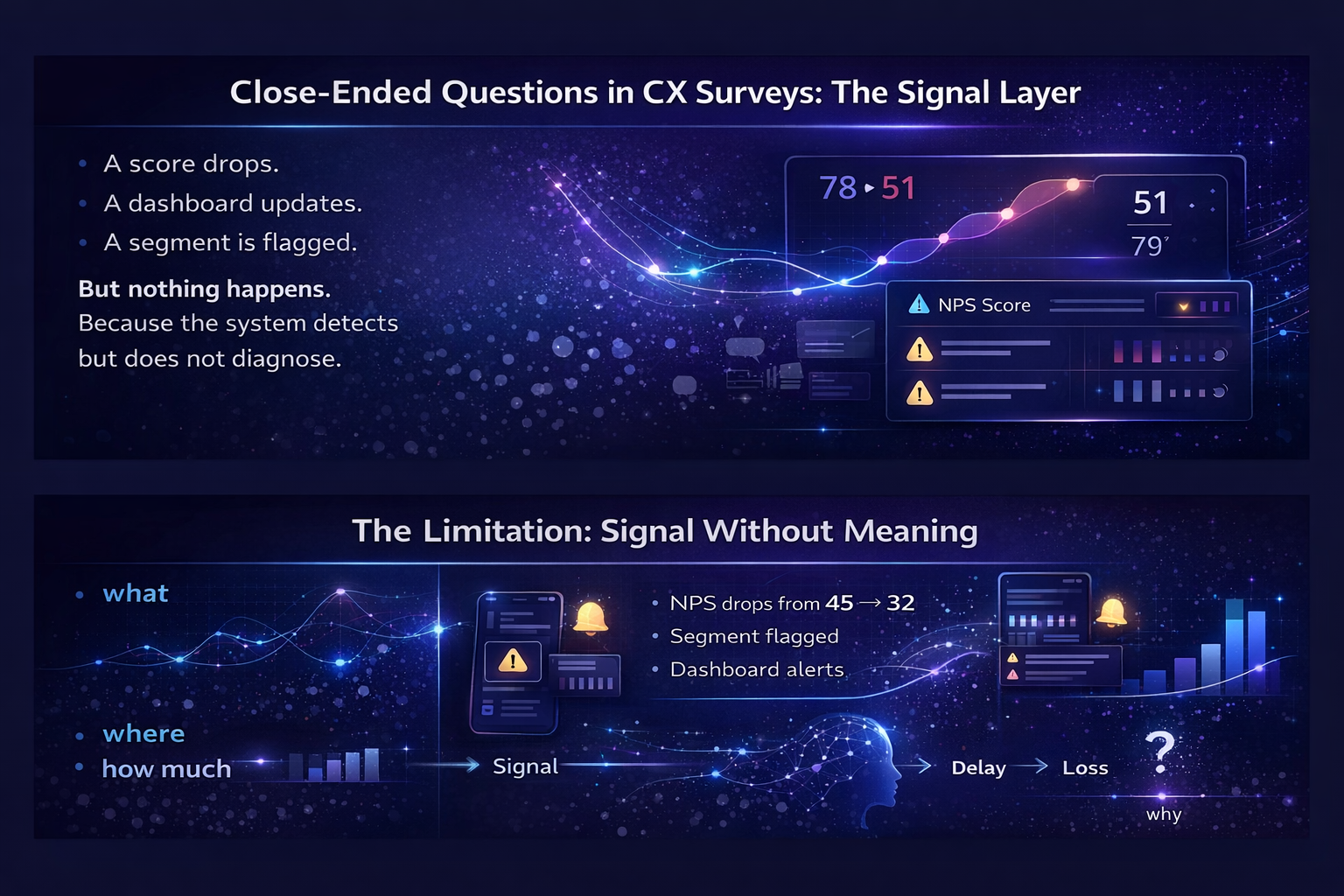

A score drops.

A dashboard updates.

A segment is flagged.

But nothing happens, because the system detects but does not diagnose.

Closed-ended questions convert experience into structured signals such as NPS, CSAT, and ratings.

They enable measurement at scale.

Answer: What is changing?

Answer: Who is at risk?

Enable: Immediate signal detection

Closed-ended questions minimize friction.

Nonresponse runs at roughly 1–2% for closed-ended items, versus ~18% for open-ended items.

More responses = stronger signals

Closed-ended tells you:

But not:

why it happened

what to fix

Example:

Then:

Signal → Delay → Operational Friction

As Don Peppers explains:

“You can’t improve what you don’t understand at an individual level.”

Closed-ended questions:

Enable detection

Do not enable action

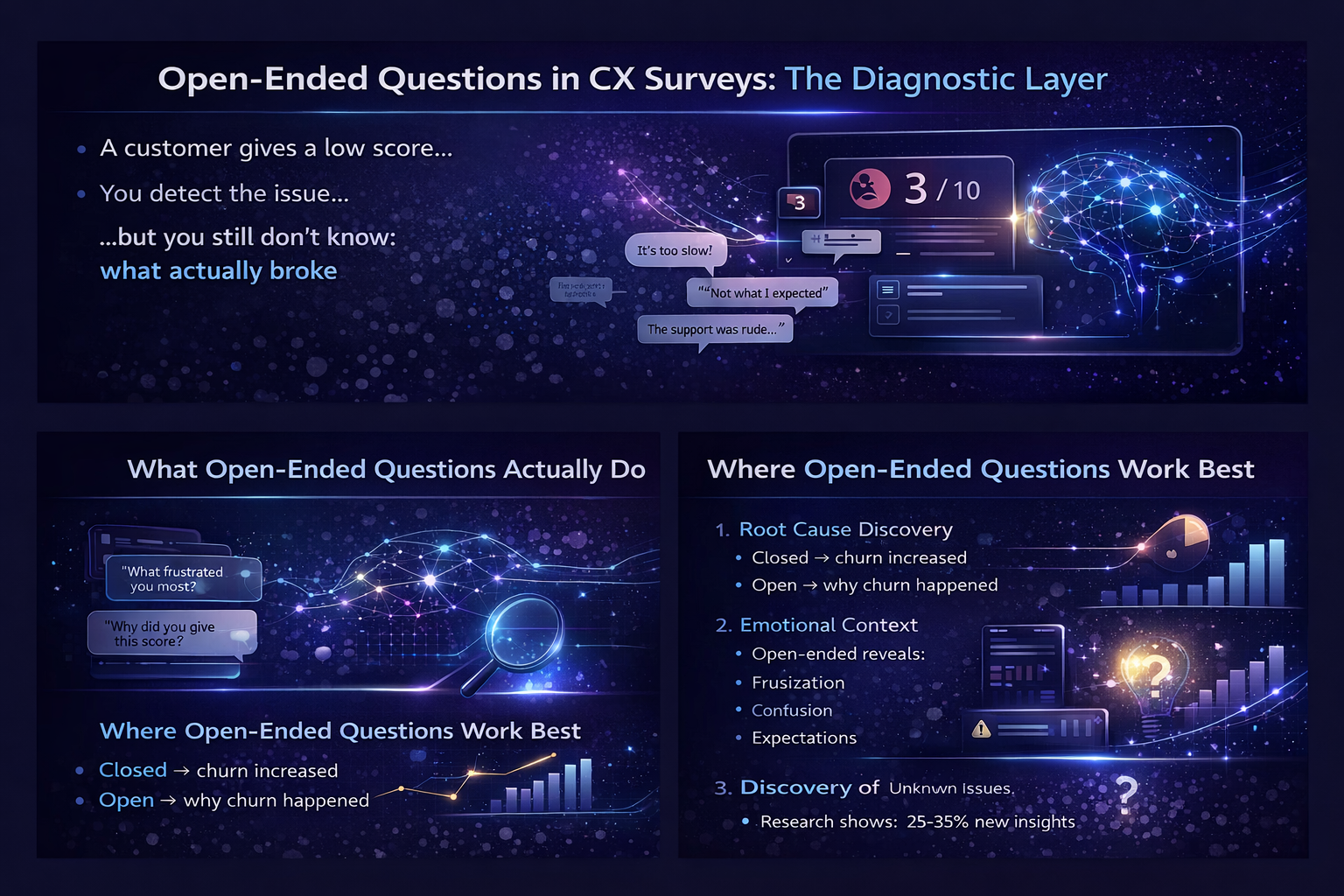

A customer gives a low score.

You detect the issue.

But you still don’t know what actually broke.

Open-ended questions capture:

Examples:

They reveal reality, not options

Closed → churn increased

Open → why churn happened

Open-ended reveals:

What scores miss

Open-ended questions capture problems you didn’t anticipate.

Research shows 25–35% new insights uncovered through open-ended responses.

Open-ended introduces friction:

15–40% lower completion rates

Too many open-ended questions create insight without coverage.

Open-ended questions are built for understanding, not for real-time execution.

As Nate Silver notes:

“Data needs interpretation to be useful.”

Open-ended provides context but can slow large-scale analysis if not operationalized properly.

Open-ended questions:

Explain problems

Cannot scale alone

This is the most important principle:

Closed-ended = WHAT

Open-ended = WHY

Closed:

Open:

Used separately: incomplete

Used together: decision-ready

Most CX teams understand the theory but struggle with applying it in real situations. The difference between good and great survey design is not knowing what works, but knowing when to use it.

In always-on CX programs, where the goal is continuous monitoring, you should rely mostly on closed-ended questions because they provide consistency, comparability, and real-time tracking. However, adding one well-placed open-ended follow-up such as “What’s the reason for your score?” ensures you don’t lose the context behind the numbers.

For post-interaction moments like support calls or transactions, a hybrid approach works best. Start with a closed-ended question to capture the measurable outcome, then follow it with an open-ended question to understand the experience behind that score. These are critical “moment of truth” interactions where context directly impacts decisions.
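The hybrid flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `SurveyResponse` class, the 1-5 scale, the cut-off for a "low" score, and the wording of the positive prompt are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SurveyResponse:
    score: int                      # closed-ended answer, e.g. CSAT on a 1-5 scale
    reason: Optional[str] = None    # open-ended follow-up, if the customer gave one


def follow_up_prompt(score: int, scale_max: int = 5) -> str:
    """Pick the open-ended follow-up wording based on the closed-ended score."""
    if not 1 <= score <= scale_max:
        raise ValueError(f"score must be between 1 and {scale_max}")
    # Assumed cut-off: treat the top two points of the scale as positive.
    if score >= scale_max - 1:
        return "What worked well for you?"
    return "What's the reason for your score?"


def needs_review(response: SurveyResponse, scale_max: int = 5) -> bool:
    """A low score with a written reason is ready for someone to act on."""
    is_low = response.score < scale_max - 1
    return is_low and bool(response.reason and response.reason.strip())
```

The closed-ended score decides which follow-up is asked, and the open-ended text decides whether there is enough context to act. A low score without a comment is still a signal, but it stays a signal until someone collects the reason.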

When a customer is leaving, you are no longer measuring; you are discovering. In these cases, start with open-ended questions because you don’t yet know the real reason behind the churn. Once patterns emerge, closed-ended questions can be used later to validate and quantify those insights at scale.

In early-stage research or product discovery, the goal is not measurement but exploration. This is where open-ended questions should dominate, as they help uncover unmet needs, hidden friction, and customer language that structured questions would never capture.

For enterprise-level dashboards and executive reporting, closed-ended questions should dominate. These systems require structured, comparable data to track performance, identify trends, and trigger alerts. Open-ended data, while valuable, does not scale efficiently in this context.

The most effective survey design is not about choosing one type over the other; it’s about balance. A structured mix of 70–80% closed-ended and 20–30% open-ended questions ensures you get both scale and depth without sacrificing response rates or usability.

To maintain completion rates and reduce friction, it’s also recommended to limit open-ended questions to 2–4 per survey, ensuring they add value without overwhelming the respondent.
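The two guidelines above (a 20–30% open-ended share and 2–4 open-ended questions per survey) are easy to check mechanically before a survey goes live. The function below is a hypothetical helper written for this article, not part of any survey platform; the name `check_survey_mix` and the warning wording are assumptions.

```python
def check_survey_mix(closed_count: int, open_count: int) -> list[str]:
    """Return a list of warnings; an empty list means the mix fits the guideline."""
    total = closed_count + open_count
    if total == 0:
        return ["survey has no questions"]

    warnings = []
    # Guideline from the text: 20-30% of questions open-ended.
    # Integer comparison avoids floating-point edge cases at exactly 20% or 30%.
    if not 20 * total <= 100 * open_count <= 30 * total:
        share = open_count / total
        warnings.append(f"open-ended share is {share:.0%}, outside 20-30%")
    # Guideline from the text: 2-4 open-ended questions per survey.
    if not 2 <= open_count <= 4:
        warnings.append(f"{open_count} open-ended questions, outside 2-4")
    return warnings
```

For example, `check_survey_mix(8, 2)` returns an empty list, while `check_survey_mix(5, 5)` returns two warnings. Taken together, the two guidelines only fit surveys of roughly 7 to 20 questions: 2 open-ended questions at a 30% share imply at least 7 questions in total, and 4 at a 20% share imply at most 20.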

For years, open-ended feedback was considered valuable but impractical at scale. The problem wasn’t collecting it; it was making sense of it fast enough to act.

Traditionally, analyzing open-ended responses meant manual coding, slow turnaround times, and inconsistent interpretations across teams. By the time insights were ready, the opportunity to act had already passed.

Today, AI has fundamentally shifted this limitation. It can now automatically extract themes, detect sentiment, and cluster similar responses, turning unstructured text into structured insight almost instantly.

With AI in place, teams can now:

This makes open-ended data not just insightful but operational.

However, AI is not a replacement for human thinking. It is an accelerator.

It helps surface patterns and signals faster, but human judgment is still essential to interpret context, prioritize actions, and make decisions that impact real customers.

The difference between average CX teams and high-performing ones is not the amount of data they collect but how quickly they turn it into action.

Modern CX systems increasingly follow a structured execution flow designed to reduce decision latency and improve responsiveness.

Step 1: Capture Signal (Closed-Ended)

Structured metrics such as NPS, CSAT, and rating systems help organizations identify patterns, benchmark journeys, and detect operational risk earlier.

Step 2: Capture Reason (Open-Ended)

Open-ended responses provide the context behind the score, revealing friction, unmet expectations, and emotional drivers that structured data alone cannot explain.

Step 3: Translate Insight Into Visibility

AI-assisted analytics help organize large volumes of feedback into themes, trends, and operational patterns that teams can interpret faster.

Step 4: Trigger Operational Action

The most effective CX systems do not stop at reporting. They connect customer insight with workflows, ownership, escalation systems, and operational response.
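The four steps above can be sketched as a small Python pipeline. Everything here is illustrative: the `Feedback` class, the theme keywords, and the owner names are hypothetical, and the keyword match in `tag_theme` is only a stand-in for the AI-assisted analytics the text describes. The one borrowed convention is the standard NPS definition of a detractor (a score of 0 to 6).

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical theme keywords and owning teams; a real system would use
# AI-assisted text analytics and its own routing rules instead.
THEME_KEYWORDS = {
    "billing": ["charge", "invoice", "refund"],
    "support": ["agent", "wait", "callback"],
    "delivery": ["late", "shipping", "delivery"],
}
THEME_OWNERS = {"billing": "finance-ops", "support": "support-lead", "delivery": "logistics"}


@dataclass
class Feedback:
    customer_id: str
    nps: int            # Step 1: closed-ended signal (0-10)
    comment: str = ""   # Step 2: open-ended reason


def is_at_risk(feedback: Feedback) -> bool:
    """Step 1: NPS detractors (0-6) count as a risk signal."""
    return feedback.nps <= 6


def tag_theme(comment: str) -> Optional[str]:
    """Step 3: naive keyword match standing in for AI theme extraction."""
    text = comment.lower()
    for theme, keywords in THEME_KEYWORDS.items():
        if any(word in text for word in keywords):
            return theme
    return None


def route(feedback: Feedback) -> Optional[dict]:
    """Step 4: turn an at-risk response into a task for an owning team."""
    if not is_at_risk(feedback):
        return None
    theme = tag_theme(feedback.comment)
    return {
        "customer_id": feedback.customer_id,
        "theme": theme or "unclassified",
        "owner": THEME_OWNERS.get(theme, "cx-triage"),
    }
```

Calling `route(Feedback("c1", 3, "I was charged twice and want a refund"))` produces a task owned by `finance-ops`, while a promoter's response produces nothing. The point of the sketch is the shape of the flow: the score decides whether to act, the comment decides who acts, and a response with no recognizable theme still lands in a triage queue rather than a report.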

Modern CX is no longer defined by how much feedback you collect.

It is increasingly defined by how effectively organizations turn insight into action.

The goal of modern CX is not to collect better feedback.

It is to build systems where every signal leads to a decision, and every decision leads to action.

In today’s environment, speed of action, not volume of data, is what defines customer experience.

The biggest mistake in CX surveys is asking: “Which is better?”

The Right Question: Where should each be used to drive action?

What Changes When You Get This Right

problems are detected earlier

root causes become visible faster

actions happen with less delay

CX shifts from: reporting → operational response

Most CX platforms help you understand what happened.

But by the time you understand it, the customer has already left.

If your current CX system still depends on:

you are reacting too late.

Move from Insight to Immediate Action

Modern CX is no longer about collecting more feedback.

It is about:

detecting risk earlier

understanding customer friction faster

connecting insights across journeys

triggering action before outcomes are lost

With modern CXM systems, enterprises can:

identify customer friction in real time

detect journey-level drop-offs

uncover root causes faster

trigger workflow-based interventions

improve retention, conversion, and customer lifetime value

Customers don’t wait.

They:

Every delay is a lost opportunity.

Book a Demo and see how modern CX systems move beyond reporting and into execution.

Experience how modern CX systems connect feedback, operational visibility, and workflow execution in real time.

Closed-ended questions provide predefined answer options (like ratings or multiple choice), making them ideal for measurement, benchmarking, and dashboards.

Open-ended questions allow customers to respond in their own words, helping uncover root causes, emotions, and deeper insights.

Closed-ended tells you what is happening

Open-ended tells you why it is happening

Neither is better alone.

The most effective CX surveys use a combination of both.

This combination enables decision-ready insights instead of just data collection.

Best practice: Limit to 2–4 open-ended questions per survey

Too many open-ended questions:

A balanced approach (70–80% closed, 20–30% open) ensures both depth and scalability.

Open-ended questions require:

This increases effort, especially on mobile devices, leading to:

This is why they should be used strategically, not excessively.

AI enables:

This reduces:

And allows CX teams to: turn unstructured feedback into real-time actionable insights

Because survey design directly impacts:

Poor design leads to: insight without action

Effective design ensures: feedback → insight → action → outcome

Traditional CX systems primarily:

collect feedback

generate reports

support retrospective analysis

Modern CXM systems increasingly:

connect feedback with journey analytics

improve operational visibility

support workflow-based action

reduce response delays across teams

This shifts CX from passive reporting toward operational experience management.

The biggest mistake is asking: “Should we use open-ended or closed-ended questions?”

The correct approach is: “Where should each type be used to drive action?”

Because in modern CX:

It’s not about collecting feedback

It’s about acting on it before the customer leaves
